Police Telematics & Fleet Tracking Software

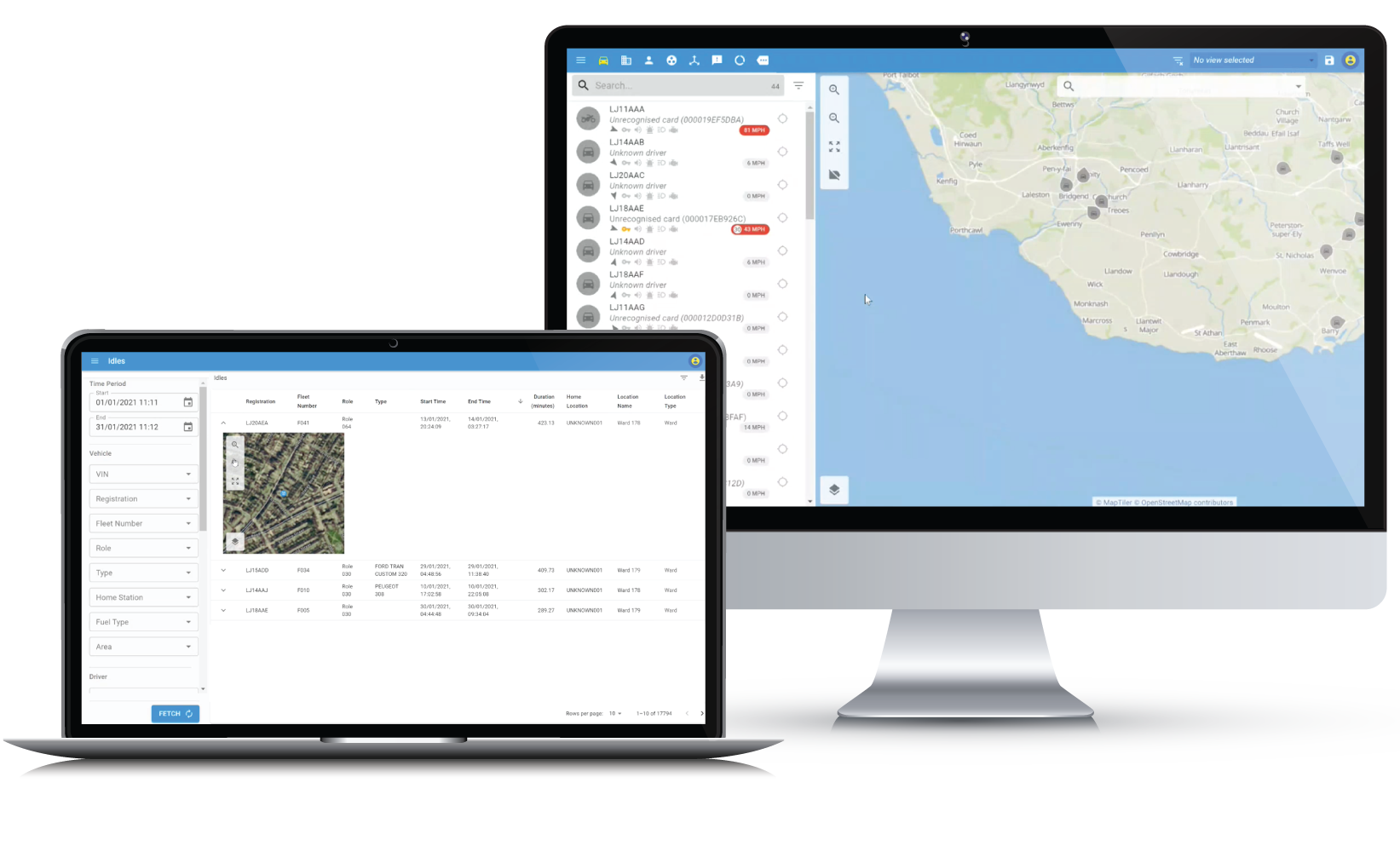

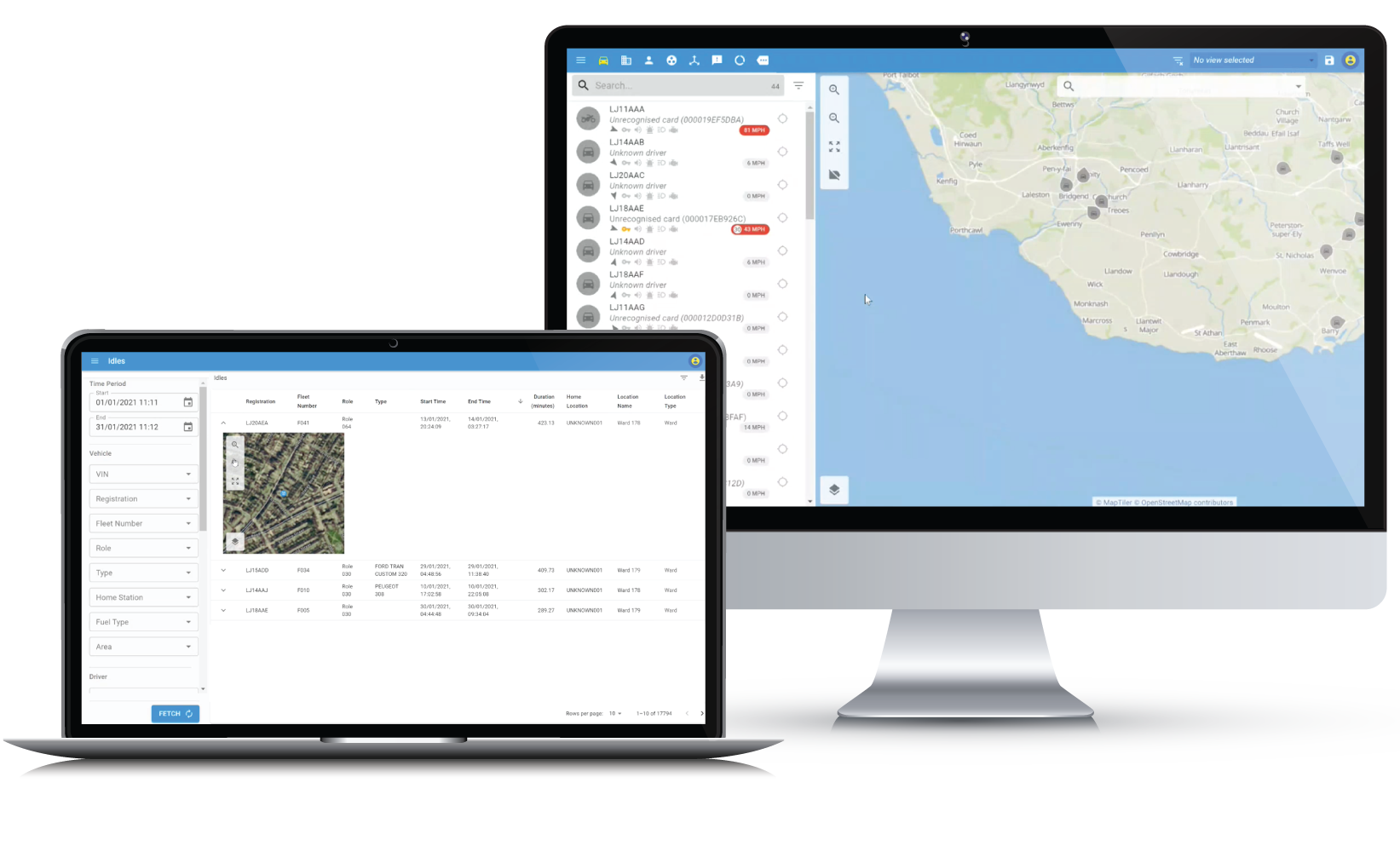

Lightbulb Analytics provides fleet management software that enables users to optimise their fleet operations. Lightbulb achieves this by providing features such as telematics, live tracking, vehicle status, fleet optimisation analysis, vehicle and personnel utilisation, driver behaviour, and collision analysis. It’s a solution built for the police, with police expertise.

Optimise your fleet without compromising operational efficiency

- Identify surplus vehicles within the fleet

- Calculate confidence levels to determine how much risk to take when optimising the fleet

- Run scenarios to show how subtle changes can deliver significant savings

- Reduce overall fleet costs, typically by up to 20%, without compromising fleet effectiveness

- Analyse statistically how many vehicles are required at specific locations to carry out core work

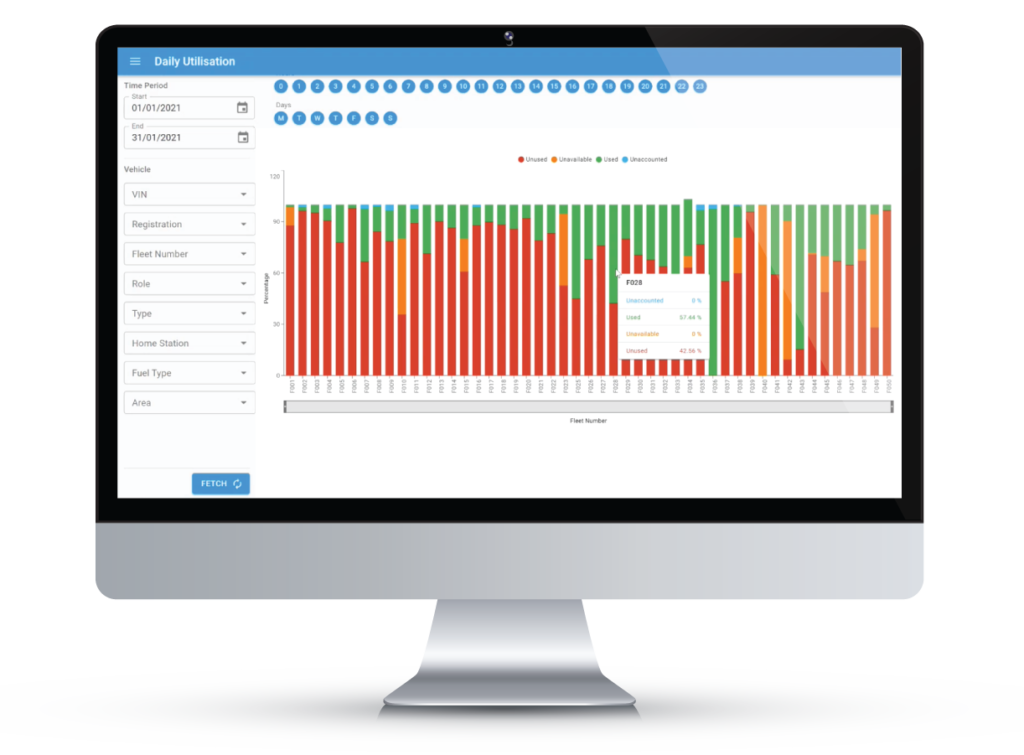

Increase fleet productivity and utilisation

- Customised reporting provides an accurate analysis of actual vehicle utilisation

- Highlight how vehicles are being used to ensure vehicle usage is effective

- Identify fleets which are under/over-utilised so they can be either re-distributed or removed

- Analyse idling at base, at workshops and elsewhere, reporting the time spent idling and the potential cost and environmental savings

Ensure duty of care with the best driver behaviour

- Reduce accidents and potential vehicle damage

- Customised reporting and league tables to motivate and educate drivers in an engaging format

- Identify drivers who require refresher training, to ensure training is targeted

- Clear analysis of harsh braking, acceleration and cornering, and of compliance with speed limits

- Reduce costs such as fuel usage, vehicle maintenance and repair, and insurance premiums

Deploy resources effectively with confidence

- Ensure the right resources, with the right skills, are deployed effectively

- Optimise resources by identifying which can be deployed without the need for radio requests

- Retrospectively analyse how resources have been deployed to help set patrol strategies and response expectations

- Report on deployment: time spent, resources committed, demand, locations and more

A fleet of 1,000 vehicles typically saves:

Want to learn more?

Reach out to our team of experts for more information.

Creating Lightbulb Moments

At our core, we are a software development & data analytics business with over 20 years’ experience in the industry. We strive to provide our clients with “Lightbulb” moments, enabling them to make strategic business decisions based on intelligent data analysis.

Lightbulb Analytics (LBA) is one of the UK’s leading providers of telematics-driven data analytics. Our analysis is used by a number of UK police forces and private sector businesses to ensure maximum efficiency and optimal performance of their resources.

But don’t just take our word for it. Read about how we’ve helped our clients:

Client experienced an 18% improvement in driver behaviour within 90 days of proactive reporting

On average we reduce overall fleet costs by 20% without compromising operational efficiency

On average we increase resource time in working environments by 22%

We are secure, trustworthy, and certified.

Lightbulb Analytics has achieved the ISO 27001 Information Security Management accreditation and the ISO 9001 Quality Management accreditation, and also holds the Cyber Essentials Plus accreditation. Our clients deserve the best and continue to benefit from the best practice set out by the International Organization for Standardization (ISO).

Contact Us

Please fill out the form below for more information or to schedule a demo.

We take security seriously and will never share your email address with third parties.